This text is from the Celsius Wiki, which was published between 2015 and 2018.

The growth of social media, streaming services and cloud data storage has led to an increase in the number of data centres that store data on servers. These data centres are energy demanding and require intensive cooling, which in turn produces a great deal of excess heat that must be removed. This heat can serve as a heat source for district heating systems.

Computers and servers need a high-quality environment within the optimal temperature and humidity range to function properly. To achieve a satisfactory environment, systems that ventilate, cool, humidify and dehumidify the environment are necessary. These systems require energy, and data centres usually have a very high energy density. Data centres can consume up to 100 or even 200 times as much electricity as standard office spaces do (U.S. Department of Energy. Federal Energy Management Program, 2011). It has been estimated that data centres used about 350 TWh electricity globally in 2010, just over 1 % of the world’s total electricity use, and the use is constantly growing (Koomey, 2011). Increasing the energy efficiency of data centres can be achieved by, among other methods, recovering the waste heat in a district heating system. Best practices for waste heat recovery, as well as energy efficiency more generally, are described below.

Strategies to improve energy efficiency in data centres

There is a desire to optimize the availability, efficiency and capacity of a data centre. All three components must work together for the end result to be as good as possible. By doing so, both energy and money can be saved. To achieve a well-performing data centre with as low energy use as possible, actors can work with the aspects summarised here. More details are given in the sections below.

1. Geographical location

Before deciding where to place the data centre, the geographical location should be considered. The most suitable location for a new data centre depends on several aspects, such as the possibility to use free cooling, the reliability of the electricity distribution system, the energy source for the electricity and the possibility to utilize the excess heat. A location with access to free cooling might yield low energy use for cooling, while placing the data centre in a warmer and more densely populated area allows both a closer relationship with the customers and the possibility to utilize and sell the surplus energy. It is difficult to give a general answer as to which alternative is the most suitable; this must be evaluated in each case, with the specific circumstances in mind.

2. Invest in IT-equipment with high performance

IT equipment should have a high efficiency at both full and half speed, so that it requires as little energy as possible to complete a given task.

3. Measure and follow up energy use

To find out where energy optimization efforts should be made, it is necessary to measure the energy used for different purposes: IT equipment, fans, pumps, cooling, lighting, etc. This can be done by measuring the electricity usage and calculating key performance indicators, such as PUE (Power Usage Effectiveness). A more suitable indicator would be one that also takes into consideration the internal and external delivery systems, use of renewable energy, energy reuse and other factors, which together give a better overview.

4. Manage airflow inside the data centre

To minimize the cooling needed, the air should be distributed in cold aisles, flow through the hot servers and later be evacuated from the hot aisles. The mixing of these air flows should be minimized to obtain low energy use. This could be done by using well-designed containment, locating hot spots and evening out the temperature.

5. Optimize free cooling

When possible, use free cooling (outdoor air, seawater, evaporating water or similar) to cool the data centre. It is usually much cheaper than using cooling produced by cooling machines or similar solutions. Sometimes it might be possible to increase the temperature in the cold aisles without risking overheating the IT-equipment. This can make it possible to utilize free cooling for the greater parts of the year, which in turn reduces the energy usage and cost.

6. Heat recovery

All the electricity supplied to the IT equipment turns into heat, and this heat needs to be removed for the equipment to function properly. Usually the heat is rejected in cooling towers or similar equipment instead of being utilized. If there is a heating demand in other parts of the building, in neighbouring buildings or in a district heating network that can take care of the heat, it can be used instead of wasted. The temperature of the waste heat is often low, but if higher temperatures are needed a heat pump can be used to raise the temperature.

7. Optimize power distribution

Much energy is lost in the conversion steps between AC and DC. These losses can be reduced by eliminating as many steps as possible. UPSs are another source of large losses, so it is important to select a high-efficiency model.

8. Produce electricity

It is also possible to produce electricity on top of the building, for example with solar cells. This doesn’t reduce the energy usage, but it can contribute to the electricity supply and can replace other sources of electricity.

Best available technology

ASHRAE’s “Thermal Guidelines for Data Processing Environments” recommends a temperature range of 18 – 27 °C, a dew point range of 5.5 – 15 °C and a maximum relative humidity of 60 % (ASHRAE TC 9.9, 2011). Too high temperatures will lead to overheating of components and malfunctions, while too low temperature or too high humidity will lead to condensation on internal components and to short circuits. Low humidity leads to static electricity discharge problems which might damage the equipment. It is therefore essential that a correct operating environment is maintained, but the associated processes are energy intensive.
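The recommended envelope quoted above lends itself to a simple automated check. The following is a minimal Python sketch, assuming sensor readings are available as temperature, dew point and relative humidity; the `AirReading` class and `envelope_violations` function are hypothetical names introduced here for illustration, and only the limit values come from the text.

```python
from dataclasses import dataclass

# Recommended envelope as quoted in the text (ASHRAE TC 9.9, 2011).
TEMP_RANGE_C = (18.0, 27.0)
DEW_POINT_RANGE_C = (5.5, 15.0)
MAX_RELATIVE_HUMIDITY_PCT = 60.0


@dataclass
class AirReading:
    """One hypothetical sensor reading taken at a server inlet."""
    temperature_c: float
    dew_point_c: float
    relative_humidity_pct: float


def envelope_violations(reading: AirReading) -> list[str]:
    """Return the names of the limits that the reading violates (empty if none)."""
    problems = []
    if not TEMP_RANGE_C[0] <= reading.temperature_c <= TEMP_RANGE_C[1]:
        problems.append("temperature")
    if not DEW_POINT_RANGE_C[0] <= reading.dew_point_c <= DEW_POINT_RANGE_C[1]:
        problems.append("dew point")
    if reading.relative_humidity_pct > MAX_RELATIVE_HUMIDITY_PCT:
        problems.append("relative humidity")
    return problems


# Illustrative readings, not measurements from any real facility.
print(envelope_violations(AirReading(22.0, 10.0, 45.0)))  # inside the envelope
print(envelope_violations(AirReading(30.0, 10.0, 45.0)))  # too warm
```

A check like this only flags readings outside the recommended range; deciding how to respond (more cooling, humidification, dehumidification) depends on the facility's own control system.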

There are different ways to reduce the energy use. The first is to invest in energy-efficient computer systems. With efficient equipment, less electricity is needed for the same amount of work. Lower electricity use by the computer systems gives a double win, since it also produces less excess heat and therefore less energy is needed to cool the facility. The design of the data centre and the way the cooling is produced are also important for its energy efficiency. Another way to increase the efficiency is to recover and use the excess heat. On-site production of electricity is an additional way to reduce the purchased energy.

Geographical location

The geographical location of a data centre is a very important issue. It sets the limits and provides the conditions for aspects such as cooling methods, heat recovery, electricity delivery and the source of the electricity. The geographical location and the climate conditions determine for how much of the year free cooling can be used, which is discussed under Cooling production further down. The larger the part of the year that free cooling can be used, the less time another type of cooling is needed, which might lower the costs for cooling.

Another issue that depends on the geographical location is whether it is possible to make use of the excess heat. In densely populated areas where there is a demand for heat, it might be possible to use the surplus in neighbouring buildings or in a district heating system. A densely populated area also provides the possibility of a closer relationship with the customers using the data centre, which can be a favourable aspect for many data centres. More information on this topic can be found under Heat recovery below.

The placement of the data centre dictates what electricity sources are available. A high reliability of the electricity grid is beneficial, since it can reduce the need for an electricity back-up system. This can in turn reduce the costs for purchase, installation and maintenance of the data centre.

Design

All the electricity that is used by IT equipment in a data centre is converted to heat that must be cooled off. With more efficient IT equipment, the electricity use for the same amount of work is reduced. In turn, this results in a lower cooling demand. By designing the data centre in an optimal way, the best conditions are provided for a low cooling demand. A data centre usually has a raised floor, in which air can be circulated and provide cooling where necessary. Computer cabinets are often organized in hot and cold aisles to maximize the airflow efficiency.

Cooling production

To cool the equipment in a data centre, air is most commonly used. The heated air from the equipment is led to a heat exchanger where the air is cooled and then recirculated to the room. The air can also be directly led to the surroundings and replaced by outdoor air. If a heat exchanger is used, cold can be achieved by for example outdoor air, cooling machines, dry coolers or cooling towers.

The geographical site where the data centre is placed determines which cooling source is required. In areas that have a favourable climate, free cooling can be used during much of the year. Outdoor air is the most common source for free cooling in data centres, but other sources of free cooling are possible, such as water from rivers, lakes, seas and similar sources.

Most commonly, the outdoor air is used to cool the recirculating air inside the building by means of a heat exchanger. The outdoor air can often be used even if it doesn’t directly meet the requirements, by treating it with evaporative cooling, dehumidification or humidification until its properties are suitable. New IT equipment can handle higher temperatures, which might make it possible to use free cooling during larger parts of the year.

An additional cooling system is needed during the days when free cooling is not sufficient. This cooling can be provided in several ways, for example with electrical cooling machines, district cooling or absorption cooling. Which additional cooling technique should be used depends on the cooling demand and site-specific conditions.

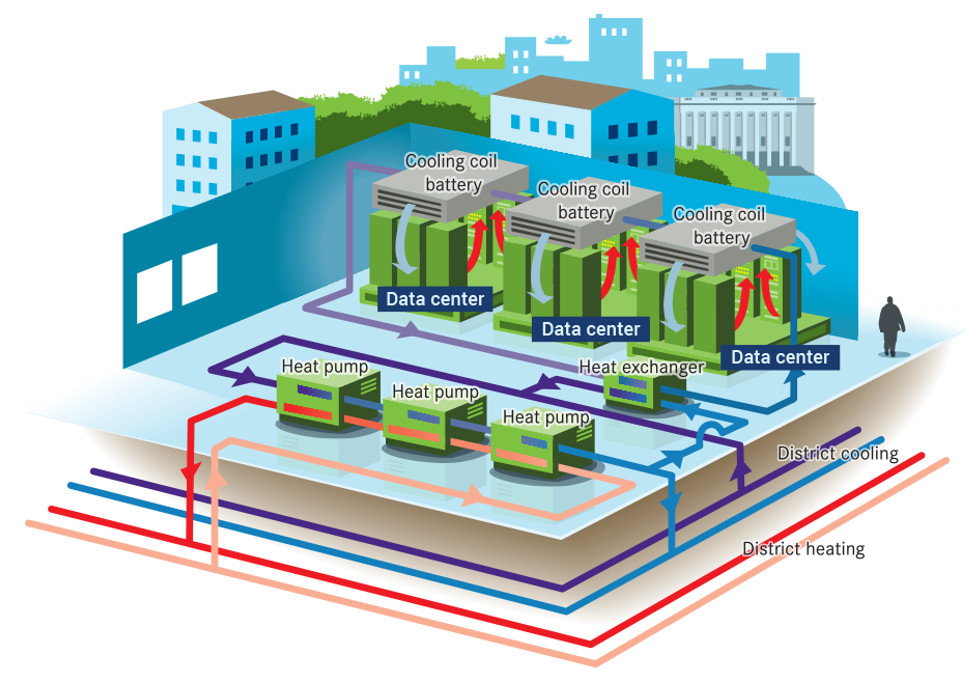

Heat recovery

In many existing data centres, the heat that all IT equipment generates is cooled off or removed, instead of being used. Since the energy needed to cool off the IT equipment is very high in a data centre, there are large economic and environmental gains to be made by utilizing the excess heat (Sjöström, 2013). The air leaving the hot aisles often has temperatures of about 25 – 40 °C, and there are different ways to recover the surplus. The feasibility of the chosen method depends on the prevailing conditions for the data centre. The excess heat can be used in other parts of the building or near-by buildings with a heating need. If there is a district heating or cooling network nearby, it could be possible to transfer the energy to the network instead (Lindfors, 2014; Rad, 2008).

To be able to use the surplus energy from the data centre in the district heating network, it needs to be converted into a useful form. Depending on the temperature of the excess heat, the heat can be transferred to the district heating network’s supply or return side. If the temperature of the excess heat is low, it might also be used in a low-temperature district heating system, or even in the district cooling network.

For the district heating company, it is usually preferable to transfer the heat to the supply side of the district heating network, where it can be used directly by their customers. The district heating company can then view the excess heat from the data centre as an additional production plant in their distribution network. In order to transfer the excess heat to the supply side of the district heating network, the temperature needs to be raised. The air from the hot aisles in the data centre is first passed through a heat exchanger where the heat is transferred to water. Then the temperature of the water is raised by a heat pump, before the heat can be transferred to the district heating network. The temperature level required for the supply side depends on the season and the specific conditions in each district heating network. Examples of temperatures in Fortum’s district heating network in Stockholm are presented later in the text, under Heat recovery: best practice.

When transferring the heat to the return side of the district heating network, the temperature of the waste heat doesn’t need to be raised very high. Examples of temperatures needed in Stockholm Exergi’s (formerly Fortum) district heating network are discussed under Heat recovery: best practice. Adding the excess heat to the return side means that the district heating company needs to raise the temperature less before the district heating water is distributed again. If the heat in the district heating network is produced in a CHP plant, however, the electricity production will be lowered, since the return water in the network is used to condense the steam in the CHP plant. A higher return temperature consequently leads to a lower production of electricity.

The cooling of the data centre can also be done by district cooling, if there is such a network available. This can be particularly suitable if there is a need to raise the temperature of the return side of the district cooling network. This heat can be transferred to the district heating supply side by using heat pumps.

To investigate whether it is possible (and economical) to connect the data centre to the district heating network (or the district cooling network), it is necessary to study the specific conditions in each case. Factors that need to be considered are, for example, the distance between the data centre and the existing district heating network, the amount of excess heat that can be transferred to the district heating network and ground conditions. A short distance between the data centre and the existing district heating network is preferable, since the costs for new distribution ducts are considerable (Lindfors, 2014). Another factor that plays a major role is what the power company is willing to pay for the excess heat. Different pricing models can be used for this. In Stockholm, Fortum has a pricing model that depends on the energy demand in the network. This results in a low price when the demand is low, and a higher price when the demand is higher.

Use of electricity for cooling systems

The fans that move the cooling air use electricity, even if the cooling energy from the outdoor air is “free”. Fans with high efficiency and low electricity consumption should be used, preferably with a variable-frequency drive, since that results in the lowest energy use. Fans in cooling towers may advantageously be driven with variable frequency so that all fans run at the same time but at a lower speed, instead of being run one by one in sequence. This results in lower total energy usage. Pumps also use electricity, and pumps with a high efficiency should be used. It is also important to use pumps that are properly sized for their operating points.
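Why running all fans at reduced speed saves energy can be illustrated with the idealised fan affinity laws, under which airflow scales linearly with speed and shaft power with the cube of speed. The sketch below is a simplification that ignores static pressure, motor and drive losses; the 10 kW rating and the function names are hypothetical and not taken from any facility in this article.

```python
def fan_power_fraction(speed_fraction: float) -> float:
    """Idealised affinity law: shaft power scales with the cube of fan speed."""
    return speed_fraction ** 3


def total_fan_power_kw(rated_power_kw: float, fans_running: int, speed_fraction: float) -> float:
    """Total power of identical fans that all run at the same speed fraction."""
    return fans_running * rated_power_kw * fan_power_fraction(speed_fraction)


# Hypothetical cooling tower with two identical 10 kW fans.
# Airflow scales roughly linearly with speed, so two fans at 50 % speed
# move about as much air as one fan at 100 % speed.
one_fan_full_speed = total_fan_power_kw(10.0, fans_running=1, speed_fraction=1.0)
two_fans_half_speed = total_fan_power_kw(10.0, fans_running=2, speed_fraction=0.5)

print(f"One fan at full speed:   {one_fan_full_speed:.1f} kW")
print(f"Two fans at half speed:  {two_fans_half_speed:.1f} kW")
```

In this idealised case the two fans together draw a quarter of the power for roughly the same airflow. Real savings are smaller, since drives and motors lose efficiency at part load.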

Tools for evaluating data centres’ energy efficiency

To evaluate the energy performance of a data centre, the first step is to find out how and where the energy is used within the data centre. Based on measurement data, different key performance indicators can be calculated, which in turn can be compared with established reference intervals. This can give a hint of the performance of the data centre and give a direction for where to focus the energy efficiency efforts. Some useful key performance indicators are discussed below.

Key performance indicators

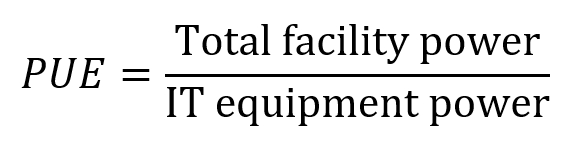

The most frequently used key performance indicator to determine the energy efficiency of a data centre is power usage effectiveness, or PUE. PUE is the ratio of the total power used by the data centre to the power used by the computing equipment (Rad, 2008). A lower number indicates a higher efficiency, with 1.0 as the theoretical minimum.

The total facility power consists of both power to IT equipment and power to support equipment, i.e. HVAC systems, power back-up systems (UPS) and other facility infrastructure, like lighting.
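As a minimal sketch, PUE can be computed as total facility power divided by IT power. The function name `pue` and all power figures below are illustrative assumptions, not measurements from any facility mentioned in this article.

```python
def pue(it_power_kw: float, support_power_kw: float) -> float:
    """Power usage effectiveness: total facility power divided by IT power."""
    if it_power_kw <= 0:
        raise ValueError("IT power must be positive")
    if support_power_kw < 0:
        raise ValueError("Support power cannot be negative")
    total_facility_power_kw = it_power_kw + support_power_kw
    return total_facility_power_kw / it_power_kw


# Hypothetical facility: 1,000 kW of IT load plus support equipment.
support_kw = {"cooling": 150.0, "ups_losses": 40.0, "lighting": 10.0}
example_pue = pue(it_power_kw=1000.0, support_power_kw=sum(support_kw.values()))

print(f"PUE = {example_pue:.2f}")
```

Breaking the support power down by purpose, as in the dictionary above, also shows which subsystem contributes most to the overhead.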

The PUE factor is widely used to provide a measure of the effectiveness of a data centre, but it has several limitations. PUE is most appropriate for facilities with their own cooling production, such as cooling machines. It cannot be used to compare facilities with externally produced cooling, and it doesn’t take into consideration whether the excess heat is recovered. Instead, if surplus energy is recovered the PUE number is often higher, which indicates a poorer effectiveness, even though heat recovery in a larger system perspective yields lowered energy usage and a reduction of emissions. There are ongoing efforts to develop more sophisticated measures that take into account both the internal and external delivery systems, use of renewable energy, energy reuse and other factors, and thereby provide a better overview (Emerson Network Power, 2011).
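One existing metric of this kind, which this article does not itself name, is Energy Reuse Effectiveness (ERE) as defined by The Green Grid: total facility energy minus reused energy, divided by IT energy. The sketch below compares PUE and ERE for a hypothetical facility with and without heat recovery. All energy figures are invented for illustration, and the assumption that a heat pump adds 100 MWh of electricity use is a simplification.

```python
def pue(total_energy_mwh: float, it_energy_mwh: float) -> float:
    """Power usage effectiveness over a period: total energy / IT energy."""
    return total_energy_mwh / it_energy_mwh


def ere(total_energy_mwh: float, reused_energy_mwh: float, it_energy_mwh: float) -> float:
    """Energy reuse effectiveness: (total energy - reused energy) / IT energy."""
    return (total_energy_mwh - reused_energy_mwh) / it_energy_mwh


IT_MWH = 1000.0  # hypothetical IT energy use over the period

# Case A: no heat recovery, 200 MWh used by support equipment.
total_a, reused_a = IT_MWH + 200.0, 0.0

# Case B: heat recovery. A heat pump adds 100 MWh of electricity use
# (assumption), and 800 MWh of heat is delivered to a heating network.
total_b, reused_b = IT_MWH + 200.0 + 100.0, 800.0

for label, total, reused in [("A, no recovery", total_a, reused_a),
                             ("B, heat recovery", total_b, reused_b)]:
    print(f"Case {label}: PUE = {pue(total, IT_MWH):.2f}, "
          f"ERE = {ere(total, reused, IT_MWH):.2f}")
```

In this invented example, PUE gets worse when heat is recovered (because of the extra heat pump electricity), while ERE improves, which is the effect the paragraph above describes.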

There are also several other key performance indicators that can be used to evaluate parts of the facility. A few of them, including PUE, along with their reference values are listed in the table below.

A list of key performance indicators useful when evaluating the performance of a data centre (U.S. Department of Energy. Federal Energy Management Program, 2011)

Best practice

There are about 500 000 data centres around the world (Emerson Network Power, 2011). Some of them either have especially low energy usage, produce electricity with solar cells or make use of surplus energy. A few examples of these are presented in the following sections.

Low energy use

Since the data centres use a large amount of electricity to run the IT-equipment and the equipment used for cooling, lighting, etc., it is important to use available technology to reduce the energy demand. Facebook and Google have several data centres around the world – below there are descriptions of how they work to reduce the energy demand in their data centres.

Currently, Facebook is the world’s most popular web site, and Facebook alone accounts for about nine percent of all Internet traffic (slightly more than Google). Facebook has several data centres in the United States and one located in the north of Europe; in Luleå, Sweden (Data Center Knowledge, 2014). Once it is built, the data centre in Luleå will be 84,000 m² and have an energy usage of about 1 TWh per year. This can be compared with the total electricity use for the Swedish industry, which is 55 TWh (Computer Sweden, 2014).
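To put the Luleå figures in perspective, the quoted annual energy use can be converted to an average power draw and to a share of Swedish industrial electricity use. This is a back-of-the-envelope sketch that assumes the load is spread evenly over the 8,760 hours of a year; the variable names are chosen here for illustration.

```python
HOURS_PER_YEAR = 8760

# Figures quoted in the text.
annual_use_twh = 1.0          # expected use of the Luleå data centre
swedish_industry_twh = 55.0   # total electricity use of Swedish industry

# 1 TWh = 1,000,000 MWh; dividing by the hours in a year gives average MW.
average_power_mw = annual_use_twh * 1_000_000 / HOURS_PER_YEAR
share_of_industry_pct = 100 * annual_use_twh / swedish_industry_twh

print(f"Average power: about {average_power_mw:.0f} MW")
print(f"Share of industrial electricity use: about {share_of_industry_pct:.1f} %")
```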

The high reliability of the power grid in this particular location has made it possible to reduce the number of backup generators by 70 %, which reduces the local environmental impact in several ways (Emerson Network Power, 2011). Less diesel fuel needs to be stored, and generator testing produces fewer emissions.

The cooling system uses free cooling when the outdoor temperature is low enough, which is a large part of the year due to the cold weather. The outdoor air is filtered and adjusted in relative humidity before entering the data centre (Vance, 2013). Green electricity from hydropower is used to drive the air fans and to power all other equipment in the data centre. In Facebook’s data centres in the U.S., mainly electricity from coal plants is used (Eriksson, 2013). In total, the PUE for Facebook’s data centre is 1.06. According to Facebook the facility is 38 % more efficient compared to the rest of the business (Data Center Knowledge, 2014).

Google has 13 data centres in total around the world (Google. Data center locations, n.d.). The energy usage in all of Google’s data centres was about 2,260 GWh in 2010 (Data Revealed: Google Uses More Power than Salt Lake City, 2011). On average their data centres have a PUE of 1.12, reported on a comprehensive trailing twelve-month basis (data from Q3 2014). To reduce the energy usage and achieve a low PUE, Google works with several aspects of the data centre (Google. Efficiency: How we do it, 2019). Some of these are presented below.

First of all, Google uses servers that are designed to use as little energy as possible. Unnecessary parts are removed, efficient power supplies are used and it is ensured that the servers use little energy while not performing any work or operating at part load. Further, two AC/DC conversion stages are removed by putting the back-up batteries directly on the server racks. This is estimated to save about 25 % over a typical system. When the equipment needs to be replaced, it is reused or recycled if possible (Data Revealed: Google Uses More Power than Salt Lake City, 2011).

To cool the IT-equipment, the temperature and airflow in the data centres and machines are managed in an optimal way. Hot spots in the data centre are located using thermal modelling, and the ambient temperature in the building is evened out by moving the computer room air conditioners to where they are needed the most. The temperature in the cold aisles is raised to about 21 °C or higher to make it possible to use free cooling during larger parts of the year, which reduces the need and energy use for cooling machines. Further, the air in the hot aisles behind the server racks is prevented from mixing with the air in the cold aisles in front of the server racks by means of appropriate ducting and permanent enclosures. Blanking panels and plastic curtains are also used to close off empty rack slots and seal off the cold aisles (Data Revealed: Google Uses More Power than Salt Lake City, 2011).

Google often uses hydronic cooling systems in its buildings, instead of air conditioning units. It has designed a cooling system called “hot huts”. When the hot air leaves the servers, it is sealed inside the hot huts. Fans on top of each hot hut then drive the hot air through water-cooled coils. The cooled air can then be used to cool the servers again, which completes the cycle. The water used in the hot huts is often cooled by evaporative cooling. In its facility in Finland, Google uses sea water instead (Data Revealed: Google Uses More Power than Salt Lake City, 2011).

Heat recovery: best practice

In Stockholm, Sweden, Stockholm Exergi (formerly Fortum) has launched a pilot project with an open district heating network. In practice this means that a building that has a surplus of energy can sell the heat to the district heating network. The surplus energy can for example be delivered from a data centre, a grocery store or an office building. When the excess heat from the data centre is recovered, it replaces primary energy in the existing production plants in the district heating network. According to Stockholm Exergi, this provides several benefits, both for the district heating company and for the party that provides and sells the surplus energy. One benefit for Stockholm Exergi is lower production costs when incoming deliveries replace more expensive production. Benefits for the data centre (or another building with surplus energy) are income from sales of energy that would otherwise be lost, a higher utilization of the cooling system and a possibility for greater reliability in the system thanks to redundancy (Fortum, 2015).

In Stockholm there are two data centres that are delivering excess heat (and cooling) to Fortum: Bahnhof Thule and Bahnhof Pionen.

Bahnhof Thule

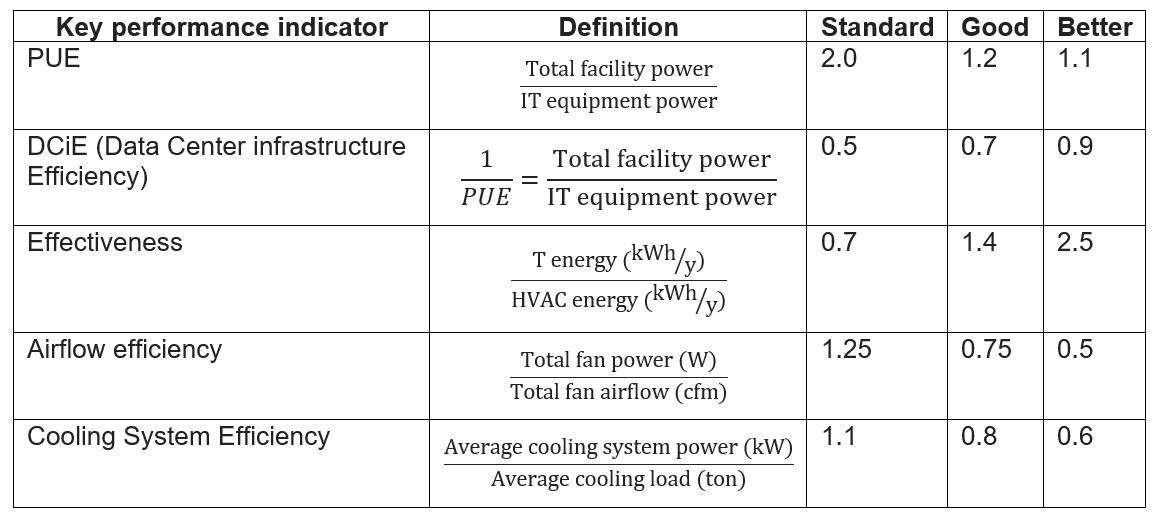

The data centre Bahnhof Thule, situated in the centre of Stockholm, consists of three data halls. The cooling system consists of three heat pumps connected in series, see Figure 1.

Figure 1. A sketch of the heat pump system at the data centre Bahnhof Thule and connections to district heating and cooling network (Fortum, 2014).

At normal operation, the heat pumps take water from the district cooling network’s return line and cool it down to the desired temperature for use in the data centre. The heat produced is delivered to the district heating supply line, thus making the district heating network the heat sink for the heat pumps. The total cooling capacity is approximately 1,200 kW when district cooling at 5.5 °C and district heating at 68 °C are delivered. The corresponding heating power is approximately 1,600 kW. A temperature of 65 °C is needed in the supply line at outdoor temperatures down to 0 °C, and at lower outdoor temperatures higher supply temperatures are needed, up to 100 °C when it is -18 °C outside. The excess heat is transferred to the supply side even when the outdoor temperature is low and a higher temperature than 68 °C is required in the district heating network. This is possible since the data centre is located where the supply temperature isn’t crucial, and the heat delivery from the data centre is relatively small compared to the total heat flow in the district heating line (Lindfors, 2014).
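The cooling and heating figures quoted above allow a rough energy balance for the heat pumps. The sketch below assumes an idealised balance in which the delivered heat equals the extracted heat plus the electrical input, with no other losses. The source does not state the electrical input, so the resulting numbers are estimates only, and the function name is hypothetical.

```python
def heat_pump_balance(cooling_kw: float, heating_kw: float) -> dict[str, float]:
    """Estimate electrical input and COPs from cooling and heating power.

    Assumes heating = cooling + electrical input (idealised, no other losses).
    """
    electric_kw = heating_kw - cooling_kw
    if electric_kw <= 0:
        raise ValueError("Heating power must exceed cooling power")
    return {
        "electric_kw": electric_kw,
        "cop_cooling": cooling_kw / electric_kw,
        "cop_heating": heating_kw / electric_kw,
    }


# Approximate figures quoted for Bahnhof Thule.
thule = heat_pump_balance(cooling_kw=1200.0, heating_kw=1600.0)

print(f"Estimated electrical input: {thule['electric_kw']:.0f} kW")
print(f"Estimated COP (cooling):    {thule['cop_cooling']:.1f}")
print(f"Estimated COP (heating):    {thule['cop_heating']:.1f}")
```

Under these assumptions, each kilowatt of electricity used by the heat pumps yields roughly three kilowatts of cooling and four kilowatts of useful heat.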

The system at Bahnhof Thule can operate without district cooling if there are interruptions in the delivery of district cooling. When the outdoor temperature is at least 20 °C the plant produces cooling at full capacity, and the surplus cooling energy is used by other district cooling customers in the network (Fortum, 2014). This is regulated in the agreement between Fortum and Bahnhof. When the outdoor temperature is below 20 °C and there is a cooling demand, the cooling machines run at a lower power so that they cover Bahnhof Thule’s need for cooling (Fortum, 2014).

The economic compensation from the utility company for the heat delivery from Bahnhof varies depending on the outdoor temperature. During a cold day in the winter season the heat delivered can be worth ten times more than on a normal day during the summer season. Therefore, the excess heat from the heat pumps is used in the district heating network mainly when the outside temperature is below 7 °C, which is about half the year. Bahnhof always has the right to deliver heat to Stockholm Exergi, and there is always a need for heat in the district heating network, but the price for the heat is correlated to the demand (Fortum, 2014).

Bahnhof has in total invested 5.3 MSEK in the cooling system, which includes three heat pumps (that meet pressure class PN16 on the condenser side, which makes it possible to connect them directly to the district heating distribution network), pipes, wiring, control systems, data collection and installation. Stockholm Exergi has invested 2.6 MSEK in the new delivery pipes for district heating and cooling.

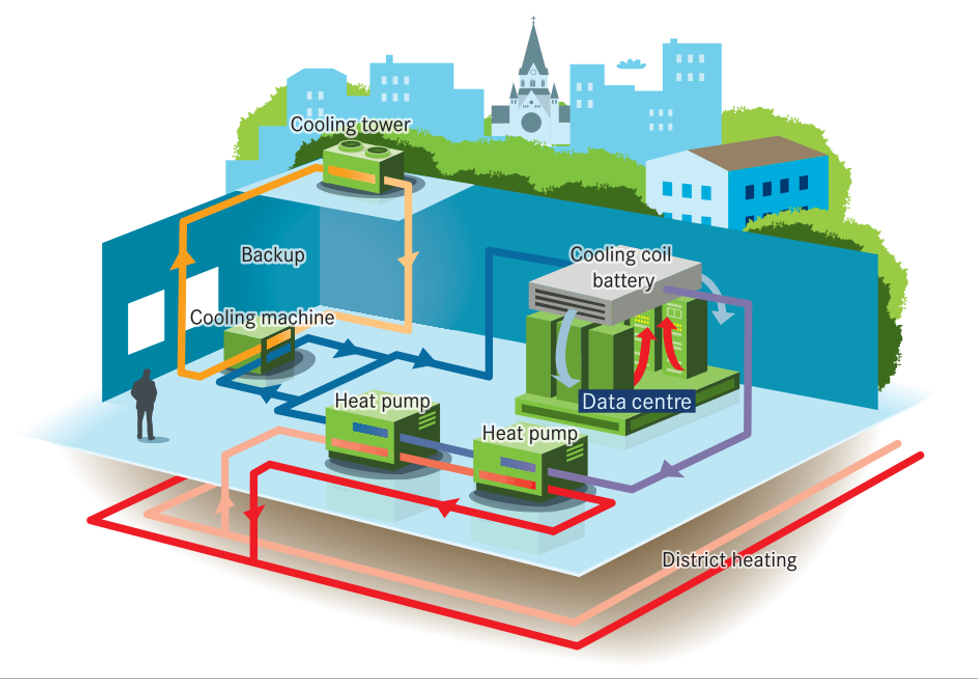

Bahnhof Pionen

Another data centre in Stockholm is Bahnhof Pionen. When built in 2007, it had a conventional cooling system where the excess heat from the cooling machines was rejected to the outside air. Now the excess heat is used in the district heating network instead. This is made possible by heat pumps connected in series and a new connection pipe between the data centre and Stockholm Exergi’s district heating network (Fortum, 2014). The system can be seen in Figure 2.

Figure 2. A sketch of the heat pump system at the data centre Bahnhof Pionen and connections to the district heating network (Fortum, 2014).

The heat pumps have a cooling and heating power of 694 and 975 kW respectively. The system is controlled by regulating the temperature on both the cold and the hot side. If the cold side can’t deliver the right temperature, the backup system is started. The backup system consists of the old cooling machines (Fortum, 2014).

The heat pumps meet pressure class PN16 on the condenser side, which makes it possible to connect them directly to the district heating distribution network. The new distribution pipe that connects the data centre with the existing district heating network is 67 metres long (DN125). In normal operation, the heat delivery is about 600 kW with a temperature of 68 °C (Fortum, 2014).

Bahnhof has invested 3.4 MSEK in the new cooling system in Pionen, while Stockholm Exergi has invested 1.3 MSEK. Bahnhof’s investment covers heat pumps, pipes, hot tapping, wires, control systems, insulation and installation, while Stockholm Exergi’s covers a new delivery pipe to the existing district heating network (Fortum, 2014).

Stockholm Exergi is confident that they have made a good investment and they value the close relationship with the customers. The sustainability aspects are important since more and more of the customers demand the production to be sustainable and environmentally friendly (Fortum, 2014).

Production of electricity

In St. Louis, Missouri, Emerson has a data centre with solar cells covering the roof. The solar cells have an area of 7,800 m² and a total power of 100 kW. The production from the solar cells contributes about 16 % of the total electricity use of the data centre. The system consists of 550 solar panels and a 95 kW DC-to-AC photovoltaic inverter (Alpha Energy, 2015).

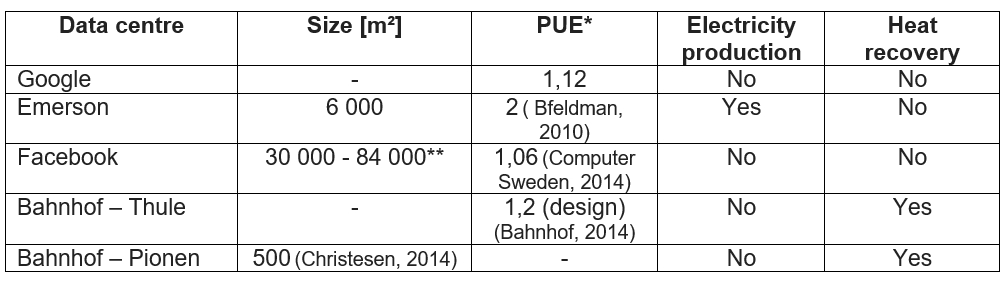

Summary of facts for data centres: Google, Emerson, Facebook, Bahnhof Thule and Bahnhof Pionen.

*) The PUE doesn’t take into consideration e.g. how the electricity used is produced or if the excess heat is recovered, giving Bahnhof Thule and Pionen a disadvantage compared to the other data centres in the list.

**) When fully expanded

Apart from solar cells, the data centre incorporates the strategies and technologies in Emerson Network Power’s Energy Logic roadmap to reduce the energy use. This roadmap focuses on efficiency of the IT systems, which in turn gives a cascade effect on overall energy usage since the cooling demand decreases. The data centre should also be constructed so the energy usage changes with demand (Emerson Network power, 2014).

References:

- Bfeldman. (11 October 2010). AFCOM conference, a question of Power Usage Effectiveness. Retrieved from https://www.fox-arch.com/afcom-conference-power-usage-effectiveness/

- Data Center Knowledge. (2014). Retrieved from The Facebook Data Center FAQ: https://www.datacenterknowledge.com/data-center-faqs/facebook-data-center-faq

- Alpha Energy. (2015). Grid-Tied Solar Power System.

- ASHRAE TC 9.9. (2011). Thermal Guidelines for Data Processing Environments – Expanded Data Center Classes and Usage Guidance. U.S. Department of Energy.

- Bahnhof. (2014). Bahnhof Thule Teknisk specifikation. Retrieved from Bahnhof: https://www.bahnhof.se/filestorage/userfiles/file/Thule%20teknisk%20broschyr.pdf

- Christesen, S. (2014). Atomsikret datacenter.

- Computer Sweden. (2014). Facebook slår upp portarna i Luleå (in Swedish). Retrieved from https://computersweden.idg.se/2.2683/1.512158/facebook-slar-upp-portarna-i-lulea

- Data Revealed: Google Uses More Power than Salt Lake City. (September 9, 2011). Retrieved from Environmental leader: https://www.environmentalleader.com/2011/09/google-reveals-electricity-use-breaking-its-silence/

- Emerson Network Power. (2011). State of the Data Center.

- Emerson Network power. (2014). Energy Logic 2.0 – New Strategies for Cutting Data Center Energy Costs and Boosting Capacity.

- Eriksson, A. (12 June 2013). Så mycket el drar Facebook i Luleå (in Swedish). Retrieved from Svenska Dagbladet: https://www.svd.se/sa-mycket-el-drar-facebook-i-lulea

- Fortum. (2014). Bahnhof Pionen. Lönsam återvinning med Öppen Fjärrvärme. Retrieved from Öppen Fjärrvärme: http://www.oppenfjarrvarme.se/media/k3-Brosch-160×160-Pionen-15okt2014.pdf

- Fortum. (2014). Fortum Öppen Fjärrvärme. Bahnhof Thule Värme från datahallen, intäkt istället för kostnad. Retrieved from Öppen Fjärrvärme: https://www.oppenfjarrvarme.se/referens/bahnhof-thule/

- Fortum. (2014). Öppen Fjärrvärme. Bahnhof Pionen Lönsam värmeåtervinning för datahallar. Retrieved from Öppen Fjärrvärme: https://www.oppenfjarrvarme.se/referens/bahnhof-pionen/

- Fortum. (15 October 2015). Bahnhof Thule. Lönsam återvinning med Öppen Fjärrvärme. Retrieved from Öppen Fjärrvärme: http://www.oppenfjarrvarme.se/media/k4-Brosch-160×160-Thule-15okt2014.pdf

- Google. Data center locations. (n.d.). Retrieved from https://www.google.com/about/datacenters/locations/

- Google. Efficiency: How we do it. (2019). Retrieved from Google: https://www.google.com/about/datacenters/efficiency/

- Koomey, J. (2011). Growth in Data centre electricity use 2005 to 2010. Oakland, CA.

- Lindfors, J. (2014). Key Account Manager, Fortum.

- Rad, P. e. (2008). Best practices for increasing data centre energy efficiency. Dell Power Solutions.

- Sjöström, L. (2013). The use of energy in data centres: energy flows and cooling. Uppsala Universitet.

- U.S. Department of Energy. Federal Energy Management Program. (2011). Best Practices Guide for Energy-Efficient Data Center Design.

- Vance, A. (4 October 2013). Inside the Arctic Circle, Where Your Facebook Data Lives. Retrieved from Bloomberg: https://www.bloomberg.com/news/articles/2013-10-04/facebooks-new-data-center-in-sweden-puts-the-heat-on-hardware-makers

External links:

- ReUseHeat

- RESCUE Project